How Synthetic Identity Fraud Bypasses Traditional KYC

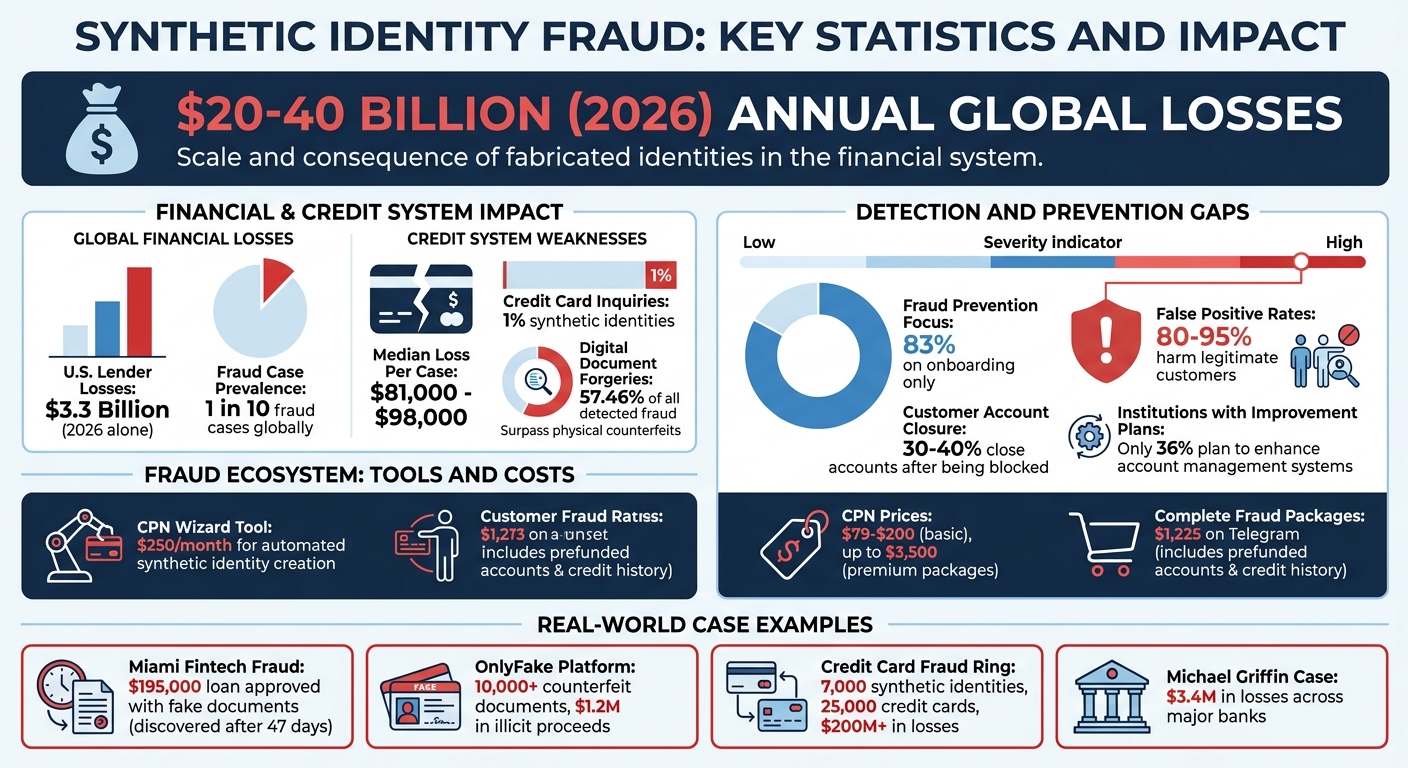

Synthetic identity fraud costs billions of dollars globally by exploiting weaknesses in verification systems. Fraudsters combine real and fake data, such as a genuine Social Security Number (SSN) paired with a fabricated name, to create identities that pass verification checks. These identities build credit histories over months, then execute fraud schemes, causing estimated losses of $20–$40 billion annually.

Key Issues:

- Generative AI makes creating fake identities faster and cheaper.

- Fraudsters use tools like "CPN Wizard" ($250/month) to automate fake identity creation.

- Weaknesses in KYC systems: Individual data points are verified, not the identity as a whole.

- Biometric checks fail against deepfakes and injection attacks.

Impacts:

- U.S. lenders lost $3.3 billion in 2026 alone.

- Synthetic identities account for 1 in 10 fraud cases globally.

- High false-positive rates (80–95%) harm legitimate customers, with 30–40% closing accounts after being blocked.

Solutions:

- AI tools analyze patterns across data points and detect synthetic identities.

- Features like forensic pixel analysis, metadata checks, and behavioral analytics improve fraud detection without slowing onboarding.

- APIs allow easy integration into existing KYC systems.

Synthetic fraud thrives on outdated systems, but AI-driven solutions can help financial institutions reduce losses and protect customers.

Synthetic Identity Fraud Statistics and Impact on Financial Systems

How Fraudsters Create and Use Synthetic Identities

Techniques for Creating Synthetic Identities

Fraudsters craft synthetic identities by blending real data, like Social Security Numbers (SSNs), with fake details such as names, addresses, and birth dates. This combination allows each piece of information to pass individual verification checks, even though the overall identity is fabricated.

Generative AI has made this process even more efficient. In January 2025, a tool called "CPN Wizard" emerged on Telegram, automating the creation of synthetic identities. For $250 per month, this software scans databases for unused SSNs, inserts fake identities into public records, and links these profiles to credit monitoring services for real-time credit score tracking [4]. Fraudsters also use AI to mass-produce deepfake headshots and voice clones, integrating these tools with Credit Privacy Number (CPN) creation to bypass biometric security checks.

CPNs are a key part of this scheme. These nine-digit numbers are formatted like SSNs and are either stolen from government-issued records or taken from unused SSNs found in databases. Credit repair scammers sell CPNs for $79 to $200, with premium packages costing as much as $3,500 [4]. Part of the danger is that many buyers don’t realize they are committing a crime.
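Why a CPN gets through format checks is easy to see in code. The sketch below applies the structural rules for SSNs (area numbers 000, 666 and 900 through 999 are never issued, nor are group 00 or serial 0000). The helper name `is_structurally_valid_ssn` is hypothetical, and the point is its limitation: format rules say nothing about whether a number was ever issued, or to whom.

```python
import re

# Structural rules for SSNs: area 000, 666 and 900-999 are never issued,
# nor is group 00 or serial 0000. Dashes are optional.
SSN_PATTERN = re.compile(r"^(\d{3})-?(\d{2})-?(\d{4})$")


def is_structurally_valid_ssn(value: str) -> bool:
    """Return True if value has the *shape* of an issuable SSN.

    Hypothetical helper: it cannot tell whether the number was ever issued,
    or to whom, so a fabricated CPN passes exactly like a real SSN.
    """
    match = SSN_PATTERN.match(value.strip())
    if match is None:
        return False
    area, group, serial = match.groups()
    if area in ("000", "666") or area.startswith("9"):
        return False
    if group == "00" or serial == "0000":
        return False
    return True
```

`is_structurally_valid_ssn("123-45-6789")` returns `True` even though nothing about that string ties it to the applicant. Confirming ownership needs a lookup against issuer data, which no format check can replace.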

"The problem with CPNs is that there are some people who truly have no idea that they're committing a crime. They're being solicited by their friends on social media... It's the kind of crime your friendly neighbor might do and not even realize it." [4]

- Steve Lenderman, Head of Fraud Prevention at Isolved

How Fraudsters Use Synthetic Identities in Financial Systems

Once fraudsters create synthetic identities, they integrate them into financial systems to establish credibility. These techniques set the stage for exploiting financial institutions on a large scale.

The process begins by building a credit history. Fraudsters open small accounts, such as prepaid phone plans, retail credit cards, or minor credit lines, to create a financial footprint. They make small purchases and pay off balances on time, aging the synthetic identity until it appears legitimate. This method takes advantage of credit reporting rules, where even a declined application can trigger the creation of a new credit profile.

Eventually, the fraudsters cash in on the credit histories they’ve built: they max out the credit lines and disappear. Synthetic identities serve other ends as well. In May 2026, reports revealed that North Korean hackers used synthetic identities to fund weapons programs. They scraped photos from social media, created deepfake headshots with face-swapping tools, and forged passports. These fake identities let them secure remote jobs at global companies and funnel the salaries back to the North Korean government [4].

The fraud ecosystem has become so advanced that complete packages - including prefunded bank accounts and established credit histories - are now openly sold on platforms like Telegram for around $1,225 [4]. This "Fraud-as-a-Service" model has turned synthetic identity fraud into a scalable operation for organized crime groups and even nation-states.

These methods highlight the vulnerabilities in traditional document-based Know Your Customer (KYC) processes, emphasizing the need for AI-powered solutions to combat this growing threat.



Where Traditional KYC Systems Fail

Traditional Know Your Customer (KYC) processes were built for a time when forging documents required specialized skills and expensive equipment. But with generative AI making it easy to create convincing fakes at almost no cost, these systems are struggling to keep up. This shift has exposed major flaws in how financial institutions verify their customers' identities.

Problems with Document-Based Verification

Most KYC systems rely on Optical Character Recognition (OCR) and template matching to validate documents. They check fonts, layouts, and security features. The issue? AI-generated documents can now pass these checks effortlessly. Unlike altered copies, these documents are created as seamless, coherent wholes. As Fabio Ugolini, CEO of TrueScreen, puts it: "The system has no way of knowing that the document never existed physically" [2].
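Rule-based document checks typically verify internal consistency, such as the check digits in a passport’s machine-readable zone (MRZ). The sketch below implements the public ICAO 9303 check-digit rule (weights 7, 3, 1 repeating; digits keep their value, letters A to Z map to 10 to 35, and the filler `<` counts as 0). The function names are illustrative. Because the algorithm is public, any generator can compute valid check digits, so a fabricated document passes this test as easily as a genuine one.

```python
def mrz_check_digit(field: str) -> int:
    """ICAO 9303 check digit: weights 7, 3, 1 repeating, sum modulo 10."""
    weights = (7, 3, 1)
    total = 0
    for i, ch in enumerate(field):
        if "0" <= ch <= "9":
            value = ord(ch) - ord("0")
        elif "A" <= ch <= "Z":
            value = ord(ch) - ord("A") + 10  # A=10 ... Z=35
        elif ch == "<":
            value = 0  # filler character
        else:
            raise ValueError(f"invalid MRZ character: {ch!r}")
        total += value * weights[i % 3]
    return total % 10


def field_passes(field: str, check: str) -> bool:
    """True if the printed check digit matches the computed one."""
    return len(check) == 1 and "0" <= check <= "9" and mrz_check_digit(field) == int(check)
```

The ICAO specimen passport number `L898902C3` yields check digit 6, so `field_passes("L898902C3", "6")` returns `True`; a forger’s template produces exactly the same result.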

Consider one case: in January 2026, a Miami fintech approved a $195,000 loan based entirely on fake documents, including AI-generated articles of incorporation, balance sheets, and a deepfake driver’s license. The fraud was uncovered only 47 days later [6]. Digital document forgeries now account for 57.46% of all detected fraud, surpassing physical counterfeits for the first time [6].

Even biometric checks aren’t foolproof. Standard liveness tests confirm that a live human is present, but not that this person matches the document. Fraudsters also use injection attacks, feeding synthetic video directly into the KYC system instead of capturing it through a physical camera. These techniques have driven a rise in biometric deepfakes and similar attacks [2].
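As a rough illustration of how a KYC pipeline might look for injection attacks, the sketch below checks two signals from a capture session: a camera label that matches known virtual-camera software, and frame timestamps with no jitter at all. Both signals, the `CaptureSession` fields and the marker list are hypothetical assumptions. A client-reported label is easy to spoof, so this is a weak first layer, not a defense on its own.

```python
from dataclasses import dataclass

# Hypothetical markers for common virtual-camera software; real products
# maintain much larger, frequently updated lists.
VIRTUAL_CAMERA_MARKERS = ("obs virtual camera", "manycam", "v4l2loopback", "snap camera")


@dataclass
class CaptureSession:
    camera_label: str               # device label reported by the client (spoofable)
    frame_timestamps_ms: list[int]  # capture time of each frame


def injection_signals(session: CaptureSession, min_frames: int = 10) -> list[str]:
    """Return weak signals that a video stream may have been injected."""
    signals = []
    label = session.camera_label.lower()
    if any(marker in label for marker in VIRTUAL_CAMERA_MARKERS):
        signals.append("virtual_camera_label")
    ts = session.frame_timestamps_ms
    gaps = [later - earlier for earlier, later in zip(ts, ts[1:])]
    # Assumption: physical sensors show some timing jitter, so perfectly
    # uniform gaps over many frames are treated as suspicious.
    if len(gaps) >= min_frames and max(gaps) == min(gaps):
        signals.append("perfectly_regular_frame_timing")
    return signals
```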

These vulnerabilities at the document level are further compounded by how traditional systems handle data verification.

Insufficient Data Validation and Cross-Referencing

The problem goes beyond forged documents. Weaknesses in data validation allow synthetic identities to flourish. Traditional systems verify data points like names, Social Security Numbers (SSNs), and dates of birth individually, rather than as a cohesive whole. Fraudsters take advantage of this by mixing real SSNs with made-up names and addresses. Each data point checks out, but the combined identity is entirely fabricated.
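Evaluating how signals relate to each other, rather than one at a time, can be sketched as a few cross-field rules. The example assumes the institution can see which other names have been presented with the same SSN and when the bureau file first appeared; the `Application` fields, the 30-year and 2-year thresholds, and the flag names are all hypothetical. Every field could pass on its own, yet the combination still raises flags.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Application:
    name: str
    ssn: str
    dob: date
    credit_file_opened: date  # when the bureau first saw this identity (assumed available)


def relational_flags(app: Application, names_seen_with_ssn: set[str], today: date) -> list[str]:
    """Flag combinations of individually valid fields that do not fit together.

    Thresholds are hypothetical; names are compared exactly, without normalization.
    """
    flags = []
    # The same SSN presented under a different name elsewhere.
    if names_seen_with_ssn - {app.name}:
        flags.append("ssn_linked_to_other_names")
    # An adult whose credit history only started very recently.
    age_at_file_open = (app.credit_file_opened - app.dob).days / 365.25
    file_age_years = (today - app.credit_file_opened).days / 365.25
    if age_at_file_open > 30 and file_age_years < 2:
        flags.append("credit_file_much_younger_than_person")
    return flags
```

A 45-year-old applicant whose credit file is barely a year old, and whose SSN has also appeared under another name, gets both flags. Recent immigrants can legitimately have young files, so a rule like this should raise review priority rather than decline outright; real systems would also normalize names before comparing them.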

Henry Patishman, Executive VP at Regula, explains the issue clearly:

"Fraudsters assemble identities so that every individual signal passes independently, and traditional systems rarely evaluate how those signals relate to each other. That's really the crux of the problem."

- Henry Patishman [1]

The U.S. credit system unintentionally makes this problem worse. When someone applies for credit using a previously unseen combination of SSN and personal details, credit bureaus automatically create a new profile, so simply applying gives a synthetic identity an instant "proof of existence." Synthetic identities currently account for about 1% of all credit card application inquiries in the U.S., with median losses per case ranging from $81,000 to $98,000 [4][5].
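The profile-creation behavior described above can be modeled as a toy bureau. This is a deliberately simplified, hypothetical model (`ToyBureau` and its methods are illustrative, and real bureau matching logic is far more complex): an inquiry on an unseen name, SSN and date-of-birth combination creates a file even when the application is declined, so the next lender to check finds an existing record.

```python
class ToyBureau:
    """Hypothetical, highly simplified model of bureau file creation."""

    def __init__(self) -> None:
        # (name, ssn, dob) -> list of inquiry outcomes recorded on that file
        self.files: dict[tuple[str, str, str], list[str]] = {}

    def _key(self, name: str, ssn: str, dob: str) -> tuple[str, str, str]:
        return (name.strip().lower(), ssn, dob)

    def inquire(self, name: str, ssn: str, dob: str, approved: bool) -> bool:
        """Record a credit inquiry; return True if it created a new file."""
        key = self._key(name, ssn, dob)
        created = key not in self.files
        # The file is created (or updated) whether or not the lender approves.
        self.files.setdefault(key, []).append("approved" if approved else "declined")
        return created

    def has_file(self, name: str, ssn: str, dob: str) -> bool:
        return self._key(name, ssn, dob) in self.files
```

The first `inquire` call returns `True` because it created a file, even with `approved=False`; afterward `has_file` returns `True` for the same fabricated combination, which is the "proof of existence" a synthetic identity needs.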

Adding to the challenge, financial institutions focus 83% of their fraud prevention efforts on onboarding. Once an identity passes the initial check, it’s treated as legitimate, which leaves a blind spot for synthetic identities. Over months or years, these identities build credibility before executing a "bust-out" scheme: maxing out credit lines and vanishing [7].
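Closing the post-onboarding blind spot means watching accounts for the bust-out shape. The sketch below encodes one hypothetical rule: several months of low utilization and on-time payments (the aging phase), followed by a jump to near the credit limit. The `MonthlySnapshot` type, the six-month window and the 30% and 90% utilization thresholds are assumptions for illustration, not industry standards.

```python
from dataclasses import dataclass


@dataclass
class MonthlySnapshot:
    balance: float
    credit_limit: float  # assumed > 0
    paid_on_time: bool


def bust_out_risk(history: list[MonthlySnapshot], quiet_months: int = 6,
                  quiet_util: float = 0.3, spike_util: float = 0.9) -> bool:
    """Hypothetical rule: an 'aging' phase of low utilization and on-time
    payments, followed by a jump to near the limit in the latest month."""
    if len(history) < quiet_months + 1:
        return False
    *aging, latest = history[-(quiet_months + 1):]
    aged_quietly = all(
        m.paid_on_time and m.balance / m.credit_limit <= quiet_util for m in aging
    )
    spiked = latest.balance / latest.credit_limit >= spike_util
    return aged_quietly and spiked
```

Legitimate customers also max out cards in emergencies, so a rule like this is better used to route an account to review or freeze further limit increases than to close it automatically.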

Dependence on Outdated Systems

Many financial institutions still rely on KYC systems designed for manual document review and physical records. These outdated systems are ill-equipped to detect AI-generated artifacts. They don’t analyze pixel noise patterns, check metadata for inconsistencies, or verify camera sensor data (EXIF information) - all of which can reveal AI-generated content.
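A basic metadata check of the kind these systems skip is sketched below. It assumes the EXIF tags have already been extracted into a dictionary (for example by an imaging library) and looks for missing camera tags and for a `Software` tag naming an editing or generation tool. `Make`, `Model`, `DateTimeOriginal` and `Software` are standard EXIF tag names; the software list and the function name are hypothetical. Metadata is easy to strip or forge, so this is one weak signal among several.

```python
# Standard EXIF tag names that a photo straight from a camera usually carries.
CAMERA_TAGS = ("Make", "Model", "DateTimeOriginal")
# Hypothetical list of editing or generation tools to look for in "Software".
SUSPICIOUS_SOFTWARE = ("photoshop", "gimp", "stable diffusion", "midjourney")


def exif_signals(exif: dict[str, str]) -> list[str]:
    """Return weak fabrication signals from already-extracted EXIF tags."""
    signals = []
    missing = [tag for tag in CAMERA_TAGS if not exif.get(tag)]
    if missing:
        signals.append("missing:" + ",".join(missing))
    software = exif.get("Software", "").lower()
    if any(name in software for name in SUSPICIOUS_SOFTWARE):
        signals.append("editing_or_generation_software")
    return signals
```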

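The Social Security Administration’s 2011 randomization of SSNs further complicates things. Before then, the first three digits (the area number) were tied to the state in which the number was assigned, which gave verifiers a rough geographic consistency check against an applicant’s history. Randomization removed that check, making it easier to fabricate plausible identities. Additionally, many systems can’t cross-reference SSNs with government databases in real time. Meanwhile, global losses to synthetic identity fraud are projected to reach between $20 billion and $40 billion annually by 2026 [4].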

Despite these staggering numbers, only 36% of financial institutions have plans to improve their account management systems to catch synthetic identities already present in their portfolios [7]. This reactive approach leaves them vulnerable to fraud rings that quietly build credit histories before striking.

These shortcomings highlight the pressing need for advanced, AI-driven solutions to strengthen KYC systems and address these vulnerabilities effectively.

Examples of KYC Failures in Practice

The following examples highlight how synthetic identity fraud exploits weaknesses in KYC processes. These cases show the costly consequences of such vulnerabilities, emphasizing the importance of adopting advanced verification solutions.

Case Studies: How Synthetic Identities Breached KYC Systems

Between 2017 and 2023, a fraudster known as "Jane Doe" exploited KYC weaknesses to operate across 19 financial institutions using 14 different Social Security Numbers. She obtained a $21,000 PPP loan and close to $100,000 in car loans, even after multiple arrests. Her pattern of more than 50 applications, half of them fraudulent, exposed serious flaws in identity verification systems [9].
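Patterns like this, one person cycling through many SSNs, can surface through link analysis: applications that share a phone number, email or address are grouped, and groups using several distinct SSNs are flagged. The sketch below uses a union-find structure; the field names and the `min_ssns` threshold are hypothetical. A single institution only sees its own applications, which is one reason data shared across lenders matters for cases that span many institutions.

```python
from collections import defaultdict


def _find(parent: list[int], x: int) -> int:
    """Union-find root lookup with path halving."""
    while parent[x] != x:
        parent[x] = parent[parent[x]]
        x = parent[x]
    return x


def ssn_clusters(applications: list[dict], min_ssns: int = 3) -> list[list[int]]:
    """Group applications that share a phone, email or address and return
    the groups (as application indexes) that use at least min_ssns SSNs."""
    parent = list(range(len(applications)))
    first_seen: dict[tuple[str, str], int] = {}  # (attribute, value) -> first index
    for i, app in enumerate(applications):
        for attr in ("phone", "email", "address"):
            value = app.get(attr)
            if not value:
                continue
            key = (attr, value.strip().lower())
            if key in first_seen:
                # Merge this application's group with the earlier one.
                parent[_find(parent, i)] = _find(parent, first_seen[key])
            else:
                first_seen[key] = i
    groups: dict[int, list[int]] = defaultdict(list)
    for i in range(len(applications)):
        groups[_find(parent, i)].append(i)
    return [
        members for members in groups.values()
        if len({applications[i]["ssn"] for i in members}) >= min_ssns
    ]
```

Transitive links matter here: two applications that share nothing directly still land in one group if each shares a phone or email with a third.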

Another striking case involved Yurii Nazarenko, alias "John Wick", who pleaded guilty in March 2026 to running OnlyFake, an AI-based platform that generated more than 10,000 counterfeit documents. Between May and June 2024, undercover FBI agents used the platform to obtain fake New York driver’s licenses and U.S. passports. Documents from the platform bypassed KYC checks at financial institutions and cryptocurrency exchanges, generating $1.2 million in illicit proceeds, which Nazarenko later forfeited [8].

These examples demonstrate not only the sophistication of synthetic fraud but also its far-reaching impact on financial systems and consumers.

How Synthetic Fraud Affects Consumers and Businesses

Court cases show how severe the consequences can be. In December 2021, Michael Griffin was sentenced to 100 months in prison for leading a synthetic identity fraud ring. His group used stolen Social Security Numbers and fake police reports to defraud major banks, causing more than $3.4 million in losses. Griffin was also ordered to pay $412,885.17 in restitution [10].

In another case, 18 conspirators used over 7,000 synthetic identities to acquire 25,000 credit cards, resulting in losses exceeding $200 million [11]. These incidents highlight how synthetic fraud has grown from isolated scams to large-scale, organized operations that exploit systemic vulnerabilities in KYC processes.

For businesses, the fallout includes heavier manual review workloads, heightened regulatory oversight, and even personal liability for AML officers under regulations such as AMLD6 [3]. For consumers whose Social Security Numbers are used without their knowledge, often minors and elderly people, as well as the families of deceased persons, the effects can be devastating. The fraud often goes unnoticed for years, damaging credit histories and creating long-term financial and legal problems [3][11].

AI-Powered Solutions to Detect and Prevent Synthetic Identity Fraud

AI is helping to close the gaps that traditional Know Your Customer (KYC) methods leave open. Unlike rule-based systems, AI evaluates interconnected patterns, metadata, and contextual signals, making it possible to detect fraudulent identities at scale.

How AI Improves Fraud Detection

AI stands out by analyzing the relationships between signals rather than treating data points in isolation. For instance:

- Forensic Pixel Analysis: AI can distinguish between real camera noise and the overly smooth textures generated by AI models. Real cameras produce random, hardware-specific patterns, while synthetic images lack this randomness.

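- Metadata and EXIF Verification: AI flags images that lack expected camera sensor data, a common sign of fabrication. Because many apps and platforms also strip this data from legitimate photos, missing metadata is most useful as one signal among several.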

- Injection Attack Detection: Fraudsters often bypass physical cameras by inserting synthetic videos directly into KYC systems. AI can identify these attempts, completing its analysis across multiple detection layers in just 3 seconds [5]. This speed enables real-time fraud prevention.
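The forensic pixel idea can be approximated with a classical high-pass filter: subtract each pixel’s neighbors from it and measure how much the residual varies. Sensor noise leaves a busy residual, while very smooth synthetic regions leave one near zero. This is a simplified stand-in for the learned detectors described above, not how any specific product works; the function name is hypothetical. In practice JPEG compression, denoising and resizing all change the residual, so any decision threshold would need calibrating on genuine captures.

```python
from statistics import pvariance


def noise_residual_variance(gray: list[list[float]]) -> float:
    """Variance of a 4-neighbour Laplacian (high-pass) residual.

    Sensor noise produces a busy residual; very smooth regions, typical of
    some synthetic images, produce a residual close to zero.
    """
    h, w = len(gray), len(gray[0])
    if h < 3 or w < 3:
        raise ValueError("image must be at least 3x3 pixels")
    residual = []
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            residual.append(4 * gray[y][x] - gray[y - 1][x] - gray[y + 1][x]
                            - gray[y][x - 1] - gray[y][x + 1])
    return pvariance(residual)
```

A perfectly smooth gradient scores exactly zero, because the Laplacian cancels any linear ramp; real camera images never do.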

These capabilities form the backbone of advanced AI fraud prevention tools.

Features of Effective AI Fraud Prevention Tools

The best AI fraud prevention systems share several essential features:

- Certification at the Source: This method captures documents and selfies together with forensic metadata at the moment of creation, such as GPS coordinates, device fingerprints, and network signatures. A cryptographic seal is applied immediately, shifting the focus from detecting fakes to verifying authenticity [2][3]. One retail bank reduced synthetic onboarding attempts by 70% after adopting certified capture technology [3].

- Cross-Document Consistency Checks: AI cross-references information across multiple documents, such as IDs, payslips, and utility bills, to detect discrepancies.

- Behavioral and Contextual Analytics: AI establishes profiles of legitimate user behavior, such as typing patterns, navigation habits, and device posture. Deviations from these baselines can indicate synthetic identities.

- Human-in-the-Loop (HITL) Systems: AI surfaces anomalies for human experts to evaluate, especially in ambiguous cases [12].
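The core of certification at the source is binding the captured file and its metadata together at capture time, so that any later change is detectable. The sketch below uses an HMAC-SHA256 tag from Python’s standard library as a stand-in. A qualified electronic seal from a qualified trust service provider (QTSP) would instead use an asymmetric signature and a trusted timestamp, so treat the functions `seal_capture` and `verify_capture` and the shared key as illustrative assumptions.

```python
import hashlib
import hmac
import json


def _canonical(payload: dict) -> bytes:
    # Stable serialization so the same payload always produces the same bytes.
    return json.dumps(payload, sort_keys=True, separators=(",", ":")).encode()


def seal_capture(image_bytes: bytes, metadata: dict, key: bytes) -> dict:
    """Bind the image hash and its capture metadata with an HMAC tag."""
    payload = {
        "image_sha256": hashlib.sha256(image_bytes).hexdigest(),
        "metadata": metadata,
    }
    tag = hmac.new(key, _canonical(payload), hashlib.sha256).hexdigest()
    return {"payload": payload, "tag": tag}


def verify_capture(image_bytes: bytes, sealed: dict, key: bytes) -> bool:
    """Return False if the image or any metadata field changed after sealing."""
    payload = sealed["payload"]
    if hashlib.sha256(image_bytes).hexdigest() != payload["image_sha256"]:
        return False
    expected = hmac.new(key, _canonical(payload), hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, sealed["tag"])
```

Changing a single byte of the image, or editing any metadata field such as the GPS coordinates, makes `verify_capture` return `False`.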

Here’s a comparison of fraud prevention approaches:

| Approach | Effectiveness Against Synthetic Identities | User Friction | Evidentiary Value |
|---|---|---|---|
| OCR + Classic Liveness | Low | Low | Limited |
| AI-based Deepfake Detection | Medium (declining against new models) | Low | Limited |
| Multimodal Biometrics | Medium-High | Medium-High | Medium |
| Certification at the Source | High and stable | Low | High (QTSP seal) |

By combining these features, AI systems significantly raise the cost and complexity of fraud attempts without adding unnecessary friction for legitimate users.

How to Implement AI Solutions in Your KYC System

You don’t need to rebuild your entire KYC system to integrate AI. Instead, use APIs to enhance your existing processes. These APIs can deliver signed, traceable digital objects, making integration straightforward and typically achievable within weeks [2][3].

Start with a phased approach. Focus on high-impact areas such as mobile onboarding or credit applications, and measure results before scaling up [12]. Make sure your integration captures environmental metadata, such as device fingerprints, GPS coordinates, and network signatures, at the moment of document capture. Capturing this context at the source addresses the document-level vulnerabilities of traditional KYC systems described earlier [3].
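One way to run the phased approach is in shadow mode: the AI score is computed and logged next to the existing decision, but only the legacy rules are enforced until the disagreements have been reviewed. In the sketch below, `legacy_rules` and `ai_score` are hypothetical callables standing in for your current checks and a vendor API, and the 0.8 threshold is an assumption.

```python
import logging
from typing import Callable

log = logging.getLogger("kyc.shadow")


def decide(application: dict,
           legacy_rules: Callable[[dict], bool],
           ai_score: Callable[[dict], float],
           threshold: float = 0.8,
           shadow: bool = True) -> bool:
    """Return True to approve. In shadow mode the AI score is only logged."""
    legacy_approve = legacy_rules(application)
    score = ai_score(application)      # higher = more likely synthetic (assumed)
    ai_approve = score < threshold
    if legacy_approve != ai_approve:
        # Disagreements are the cases worth reviewing before enforcement.
        log.info("disagreement id=%s legacy=%s ai_score=%.2f",
                 application.get("id"), legacy_approve, score)
    if shadow:
        return legacy_approve
    return legacy_approve and ai_approve
```

Once the logged disagreements look sound, setting `shadow=False` lets the AI score block applications alongside the existing rules, and the rollout can be reversed by flipping the same flag.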

"The controls need to focus on raising the cost of fraudulent attempts, not just adding friction. It's smart verification that raises the cost of fraud for criminals, not slower onboarding."

- Henry Patishman, Executive VP of Identity Verification Solutions at Regula [1]

For compliance and transparency, ensure your AI tools provide clear outputs and documented reasoning for alerts, which is critical for satisfying auditors and adhering to regulations like AMLD6 [12]. Dormant accounts, often targeted by fraudsters, should have their identity context refreshed upon reactivation rather than relying on outdated data [13].
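Both recommendations, documented reasoning for alerts and refreshed context on reactivation, can be combined in a small policy function that returns machine-readable reason codes with every decision. The 365-day dormancy threshold, the reason-code names and the `Decision` type are hypothetical policy choices, not regulatory requirements.

```python
from dataclasses import dataclass, field
from datetime import date

DORMANCY_DAYS = 365  # hypothetical policy threshold


@dataclass
class Decision:
    action: str                                        # "allow" or "reverify"
    reasons: list[str] = field(default_factory=list)   # audit-friendly reason codes


def on_reactivation(last_activity: date, last_verified: date, today: date) -> Decision:
    """Decide whether a reactivated account must refresh its identity context."""
    reasons = []
    idle_days = (today - last_activity).days
    if idle_days >= DORMANCY_DAYS:
        reasons.append(f"DORMANT_{idle_days}_DAYS")
    if (today - last_verified).days >= DORMANCY_DAYS:
        reasons.append("IDENTITY_CONTEXT_STALE")
    return Decision("reverify" if reasons else "allow", reasons)
```

Because every decision carries its reasons, an auditor can see why an account was sent back through verification without reconstructing the logic after the fact.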

TamperCheck offers $5 in free credits to test document forensic capabilities without requiring an initial contract [5]. This allows businesses to evaluate the technology firsthand before committing.

Conclusion: Strengthening KYC to Combat Synthetic Fraud

Key Takeaways

Synthetic identity fraud presents a tough challenge for traditional KYC methods. Fraudsters create profiles where every individual data point - like a name, Social Security number, or birth date - seems valid when viewed separately [1]. Weaknesses in current KYC systems include reliance on document-based verification methods like OCR and template matching, which struggle to differentiate between AI-generated documents and real ones [2][5]. Liveness checks can confirm a person is physically present but often fail to verify if the individual matches the documents provided. Another issue arises with credit bureau on-ramps, which can unintentionally validate synthetic identities during their first inquiry, granting them a false "proof of existence" [4]. With global losses from synthetic fraud estimated between $20 billion and $40 billion annually and synthetic identities implicated in 1 out of every 10 fraud cases worldwide, the financial consequences are immense [4].

This highlights the pressing need to revamp KYC processes to better address these vulnerabilities.

Next Steps

Take a close look at your current KYC system to pinpoint weaknesses. Are you verifying data points in isolation? Relying on OCR alone for document checks? Or leaving room for injection attacks to bypass liveness detection? If so, it’s time to act. Implement AI-driven tools that analyze interconnected patterns across multiple data points, especially in high-risk areas like mobile onboarding or credit applications. You don’t need to overhaul your entire system - API-based solutions can integrate seamlessly in just weeks [2][3]. For example, DidIBuyIt offers tools to verify transaction authenticity and strengthen fraud prevention, helping your KYC processes stay ahead of synthetic identity fraud and avoid costly losses.

FAQs

How is a synthetic identity different from identity theft?

A synthetic identity combines real stolen data with made-up or AI-generated details to create an entirely fake persona. Identity theft, by contrast, involves directly stealing and using a real person’s identity and personal information. Synthetic identities often slip past traditional KYC (Know Your Customer) checks because each component appears authentic, and because no single real person owns the combined identity, there is often no victim who notices and reports the fraud. With identity theft, the person whose credentials are misused may spot unfamiliar accounts.

What are the biggest signs my KYC process is vulnerable to synthetic fraud?

Your KYC process could be at risk of synthetic fraud if it depends heavily on static, one-time data such as identity documents or personal identifiers without incorporating real-time verification. Warning signs include struggling to identify AI-generated identities that mix stolen and fabricated information, as well as missing key verification techniques like forensic metadata analysis. Additionally, frequent false positives or delays in uncovering fraudulent activity may indicate weaknesses in ongoing risk assessment practices.

What’s the fastest way to add AI fraud detection without slowing onboarding?

The fastest way to bring AI-driven fraud detection into the onboarding process without causing delays is by verifying documents, selfies, and customer communications as they’re generated. Tools such as electronic seals, qualified timestamps, and forensic metadata enable AI to validate identity authenticity instantly. By integrating these steps into current workflows, businesses can achieve quick, smooth verification while keeping fraud prevention measures robust.